When A/B Testing Isn’t Enough: How to Improve Your Results with Deeper Insight

H.B. Duran

Updated on Apr 1, 2026

A/B testing is one of the most reliable ways to improve marketing performance.

It helps teams compare variations, validate decisions, and optimize campaigns based on real user behavior. Whether you're refining a landing page, testing ad creative, or adjusting messaging, A/B testing gives you a clear way to measure what works.

But even when an A/B test produces a clear winner, there’s often a lingering question:

Why did it work?

Without that answer, optimization becomes harder to scale. You may improve one campaign, but struggle to apply those learnings elsewhere. Over time, that leads to more testing—but not necessarily more understanding.

To get more from A/B testing, you need to look beyond outcomes and understand how users experience your content before they take action.

What A/B Testing Does Well

A/B testing is effective because it focuses on outcomes.

By comparing two versions of a page or asset, you can measure which one performs better based on real user behavior. This allows teams to:

Identify higher-performing variations

Reduce guesswork in decision-making

Continuously improve conversion rates

It’s a practical, data-driven approach—and for many teams, it’s the foundation of optimization.
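To make "which one performs better" concrete, here is a minimal sketch of one common way to compare two conversion rates: a two-sided two-proportion z-test. The article does not prescribe a statistical method, so the test choice, the function name `two_proportion_z_test`, and the sample numbers are all assumptions for illustration.

```python
import math
from typing import NamedTuple


class ABResult(NamedTuple):
    lift: float     # absolute difference in conversion rate (B minus A)
    z: float        # z statistic
    p_value: float  # two-sided p-value


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> ABResult:
    """Compare conversion rates of variants A and B (hypothetical helper)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0% or all 100%")
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return ABResult(rate_b - rate_a, z, p_value)


# Illustrative numbers only: 200 of 5,000 visitors convert on A, 250 of 5,000 on B.
result = two_proportion_z_test(200, 5000, 250, 5000)
print(f"lift={result.lift:.3f}, z={result.z:.2f}, p={result.p_value:.3f}")
```

A result like this tells you whether B outperformed A and how confident you can be in that difference. It says nothing about why, which is the gap the rest of this article addresses.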

A/B testing is excellent at measuring what users do.

Where A/B Testing Falls Short

While A/B testing shows you which version performs better, it doesn’t explain what caused the difference.

For example:

Why did users hesitate before clicking?

What made one version easier to understand than another?

Where did confusion or friction occur?

A/B testing captures the final outcome—but not the experience leading up to it.

As a result, optimization can become a cycle of trial and error. You find winners, but the reasoning behind them remains unclear.

A/B testing shows you what changed performance—but not what caused it.

The Blind Spot: Attention Without Context

To fill this gap, many teams turn to attention-based tools like heatmaps or eye tracking.

These tools show where users focus their attention and how they move through a page. That information is useful—but it still leaves room for interpretation.

Consider a simple scenario:

A user spends several seconds focused on a section of your page.

That could mean:

The content is compelling and holding interest

The message is unclear and requires effort to process

The layout creates friction or confusion

From the data alone, it’s impossible to tell.

Attention without context is ambiguous.

The Missing Layer: User Experience

Between what users see and what they do, there’s another layer that often goes unmeasured: their real-time experience.

This includes:

Engagement (how strongly attention is captured)

Cognitive load (how difficult something is to process)

Emotional response (how content feels in the moment)

Focus (how consistently attention is sustained)

These factors influence behavior before a click or conversion ever happens.

When you can measure this layer, A/B testing becomes more than a scoreboard. It becomes a way to understand why one variation works better than another.

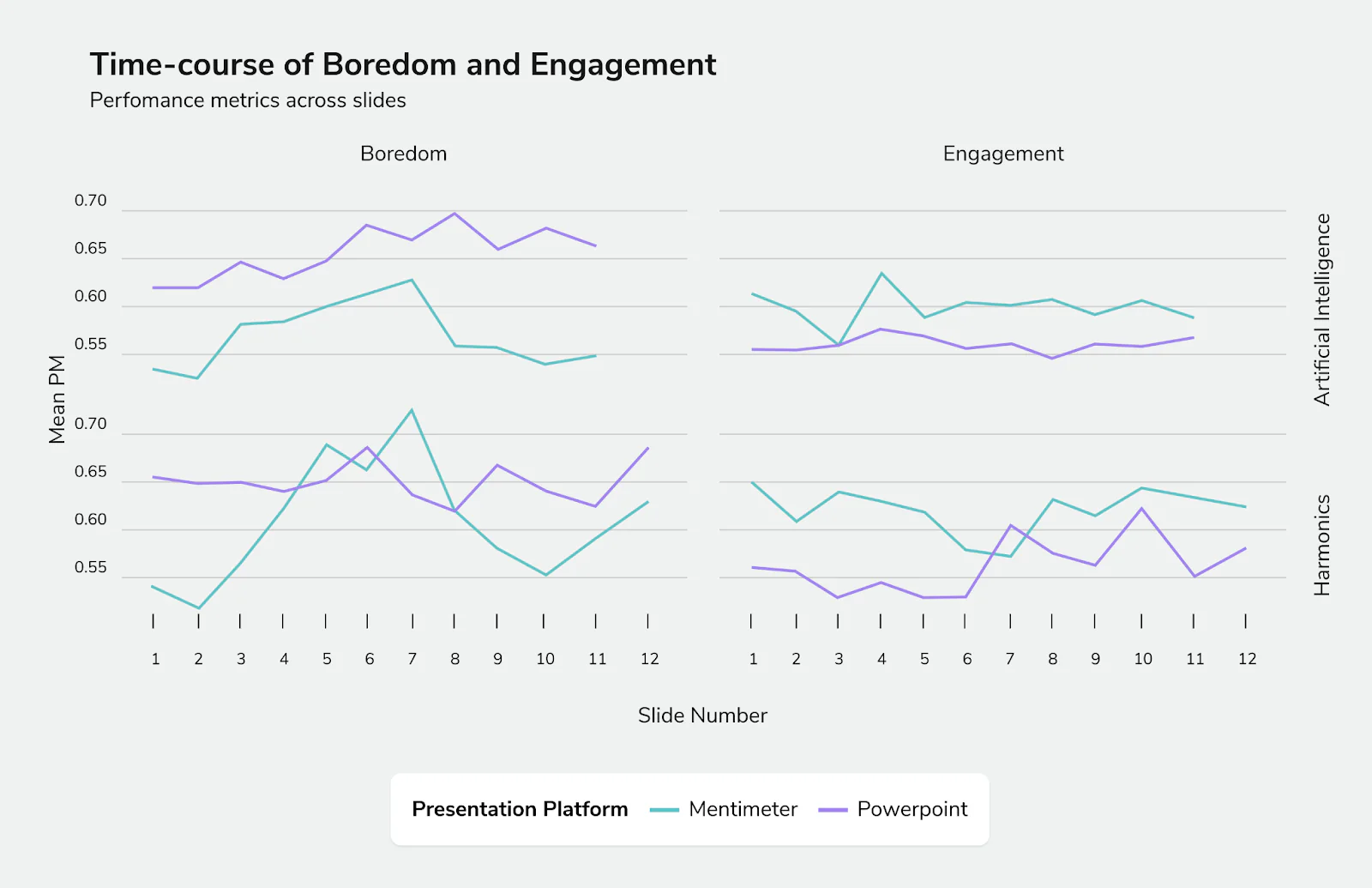

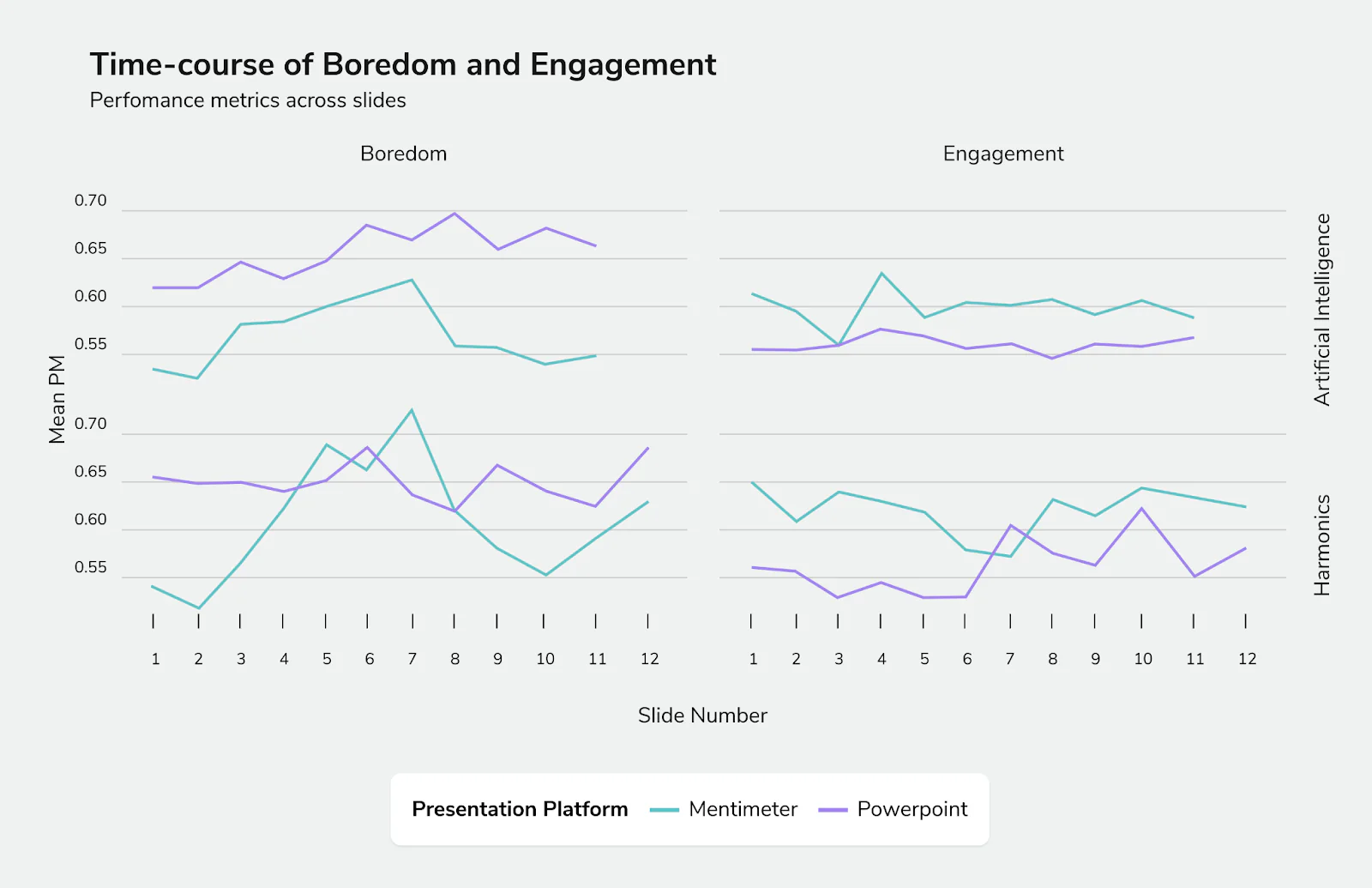

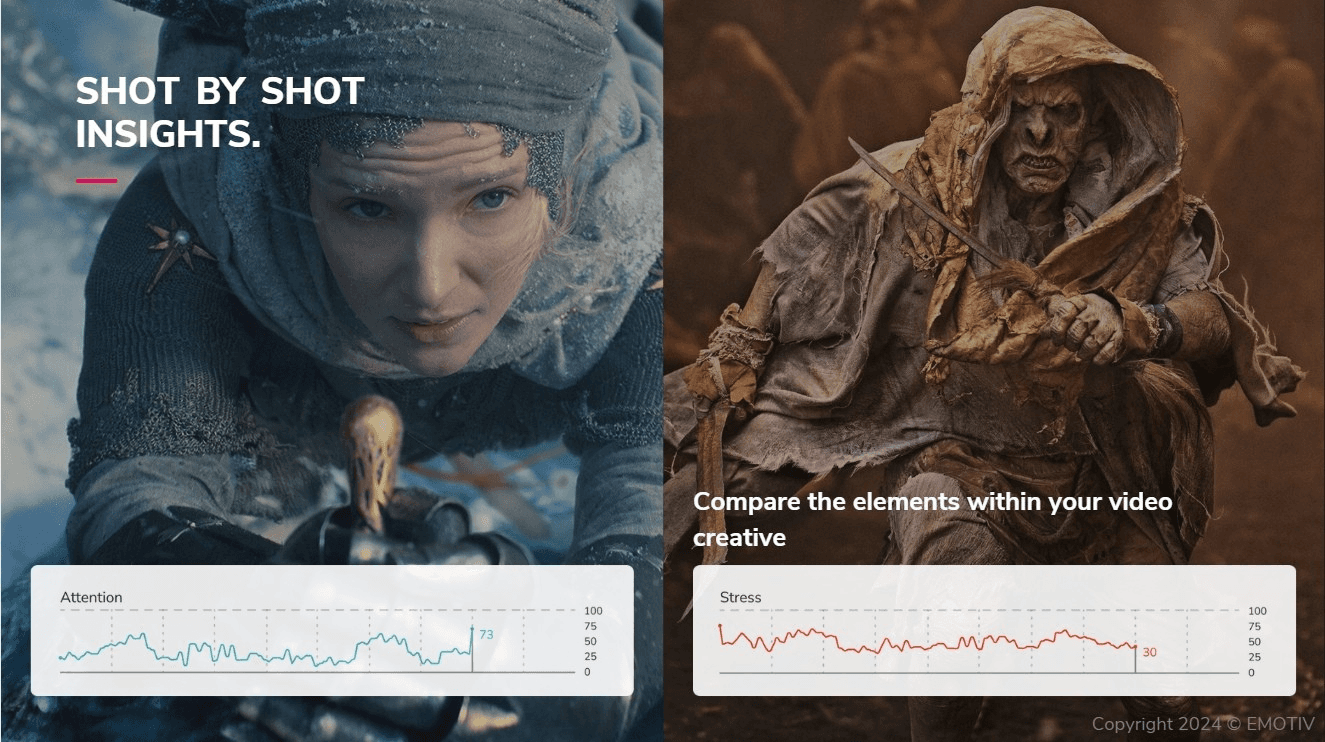

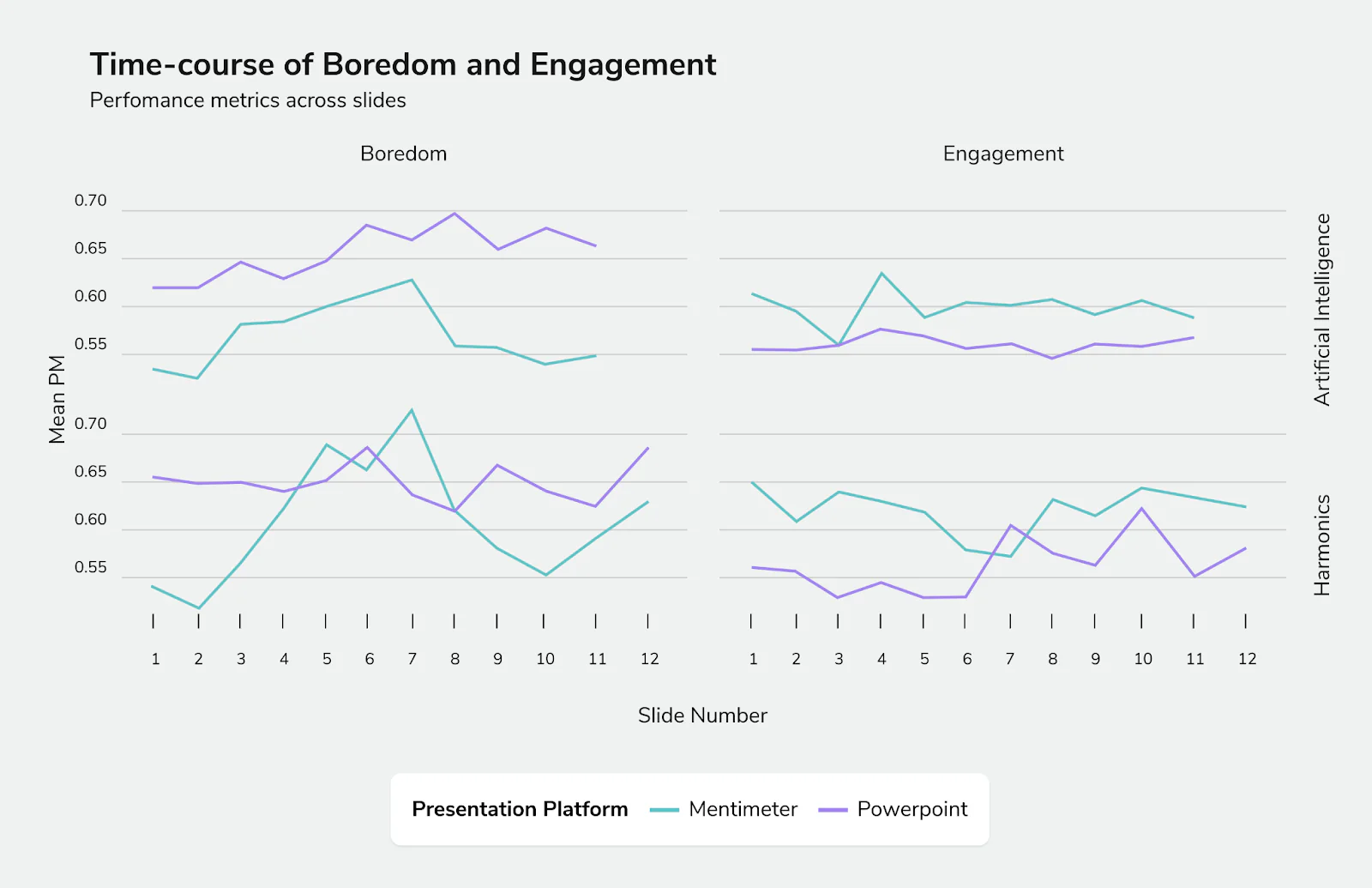

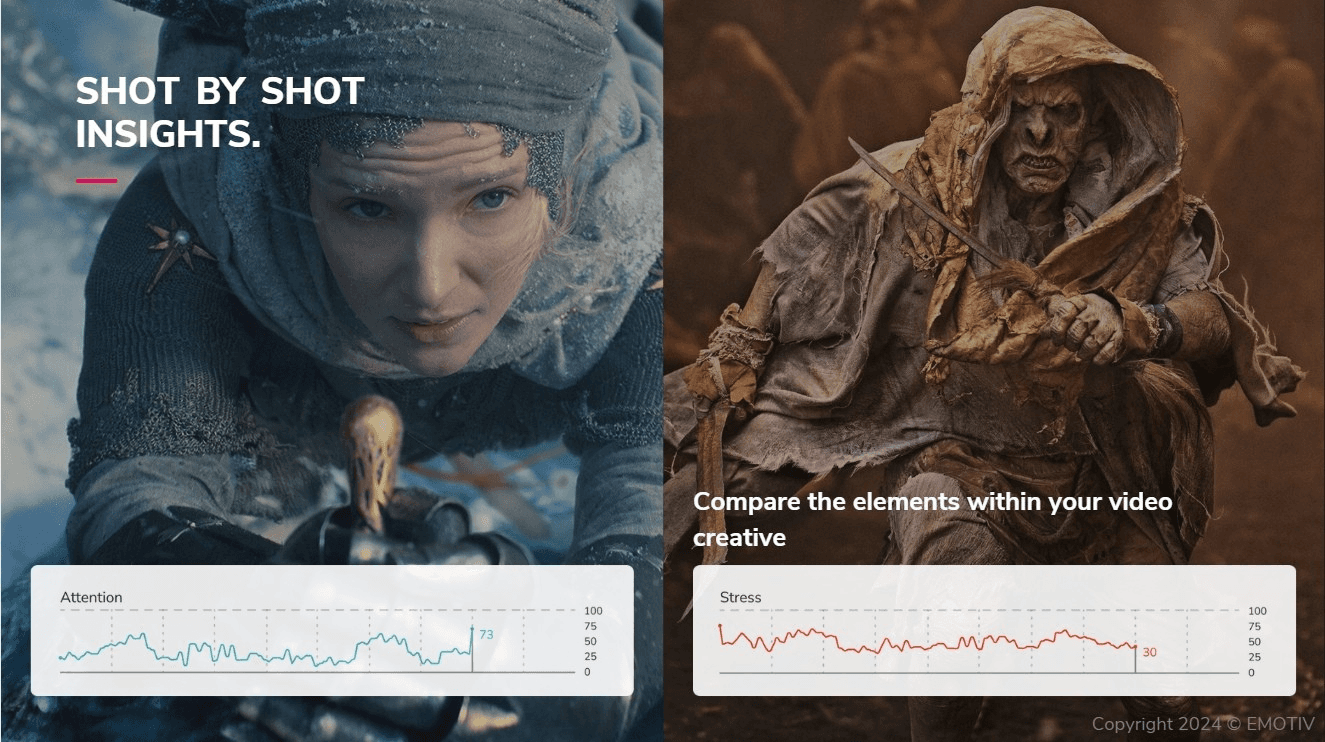

Above: An A/B test conducted with Emotiv technology to directly compare the user experiences between two presentation platforms.

How to Improve A/B Testing with Experience Data

To get more value from A/B testing, you need to pair performance data with insight into user experience.

This is where tools like Emotiv Studio come in.

By measuring brain-based responses in real time, Emotiv Studio translates complex signals into clear, usable metrics such as:

Engagement

Excitement

Stress

Focus

These metrics add context to A/B test results.

Instead of simply knowing which version performed better, you can see how users experienced each version as they interacted with it.

For example:

A version with high engagement and low stress may indicate clarity and interest

A version with high engagement and high stress may suggest confusion or cognitive overload

This additional layer of insight helps explain results—not just measure them.
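The two readings above can be sketched as a simple rule. This is an illustration only: the 0 to 1 metric scale, the 0.6 threshold, and the names `ExperienceSummary` and `interpret` are hypothetical and do not reflect Emotiv Studio's actual output format or API.

```python
from dataclasses import dataclass


@dataclass
class ExperienceSummary:
    """Average metrics for one variant (hypothetical 0-1 scale)."""
    engagement: float
    stress: float


def interpret(summary: ExperienceSummary, high: float = 0.6) -> str:
    """Map an engagement/stress pair to the readings described in the article.

    The threshold `high` is an assumed cutoff, not a documented value.
    """
    if summary.engagement >= high and summary.stress < high:
        return "may indicate clarity and interest"
    if summary.engagement >= high and summary.stress >= high:
        return "may suggest confusion or cognitive overload"
    # The article only discusses high-engagement cases.
    return "no reading suggested here"


variant_a = ExperienceSummary(engagement=0.8, stress=0.2)
variant_b = ExperienceSummary(engagement=0.8, stress=0.7)
print("A:", interpret(variant_a))
print("B:", interpret(variant_b))
```

In practice, any cutoffs would need to be calibrated against your own data, and a reading like this is a prompt for further investigation rather than a verdict.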

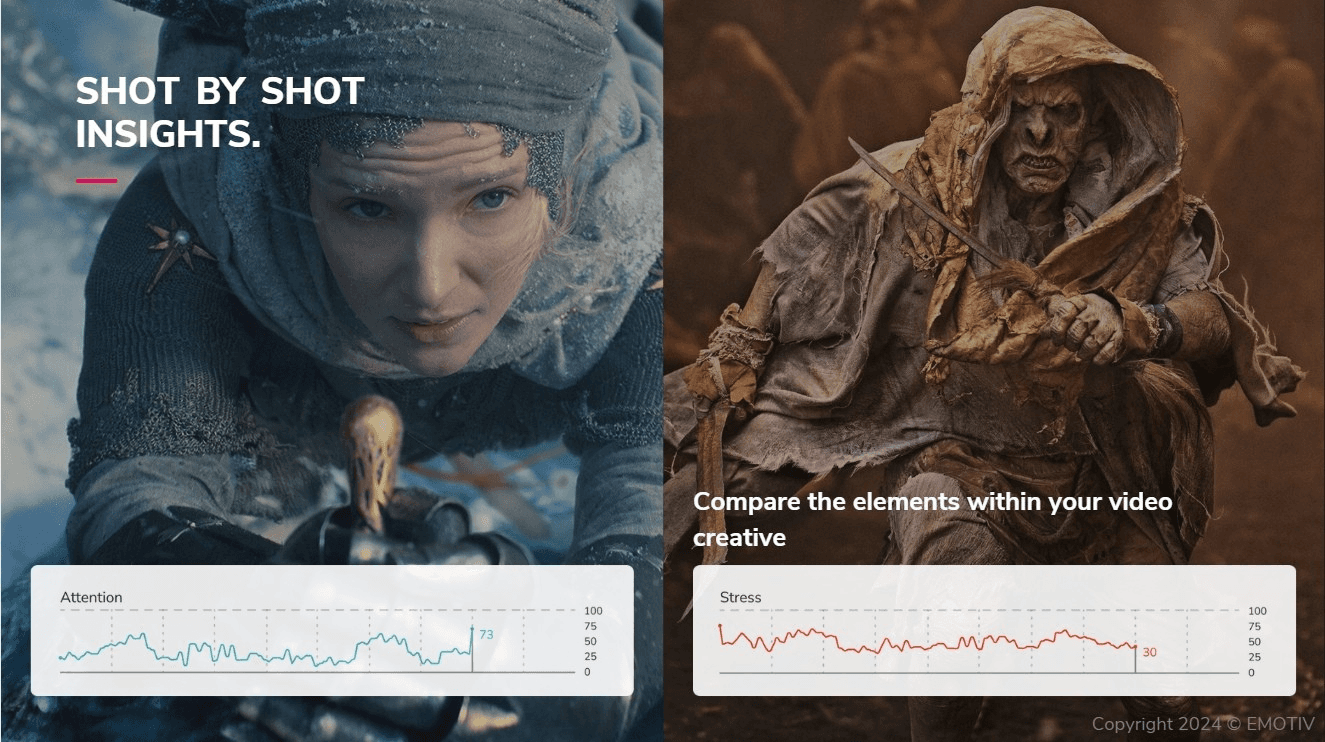

Above: A sample A/B test of TV creative, comparing two scene edits using Emotiv technology.

A/B Testing vs Other Research Methods

Each research method provides a different type of insight:

| Method | What it tells you | Limitation |
| --- | --- | --- |
| A/B testing | Which version performs better | Doesn’t explain why |
| Heatmaps / eye tracking | Where users look | No emotional or cognitive context |
| Surveys / interviews | What users say | Subject to bias and recall issues |
| EEG-based insights | How users experience content | Adds real-time context |

No single method replaces the others. But combining them leads to more informed decisions.

What This Unlocks for Marketers

When you understand how users experience your content, you can improve how you optimize.

This makes it possible to:

Identify friction before it affects performance

Improve clarity in messaging and design

Validate creative decisions with more confidence

Apply learnings across campaigns more effectively

Instead of relying only on outcomes, you gain insight into the factors that drive those outcomes.

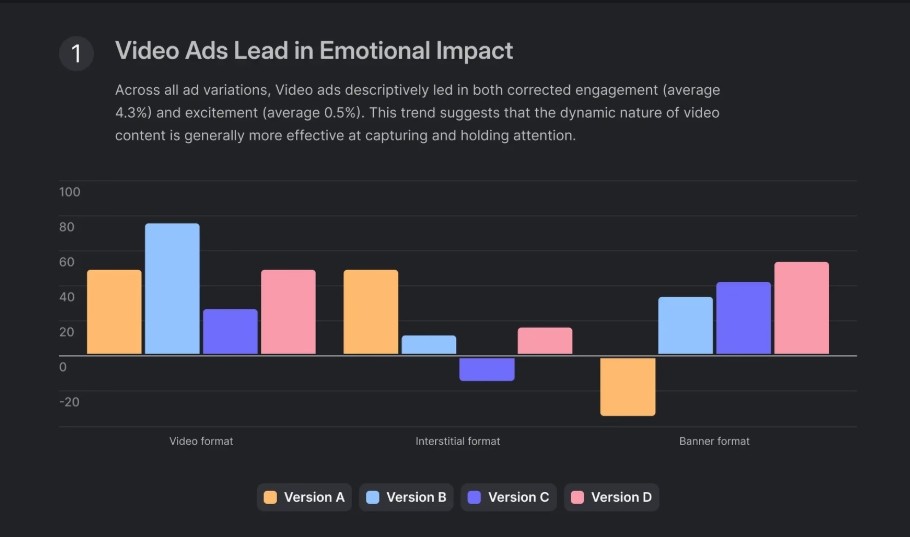

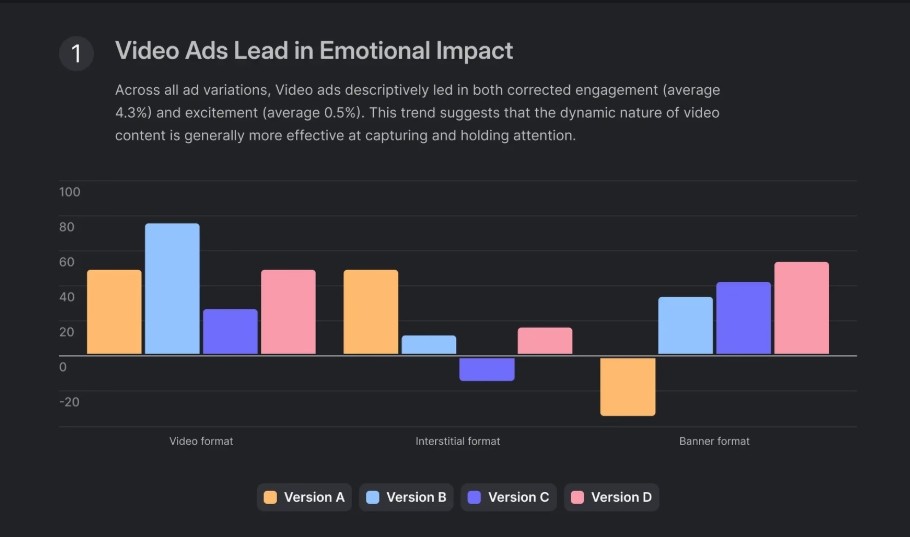

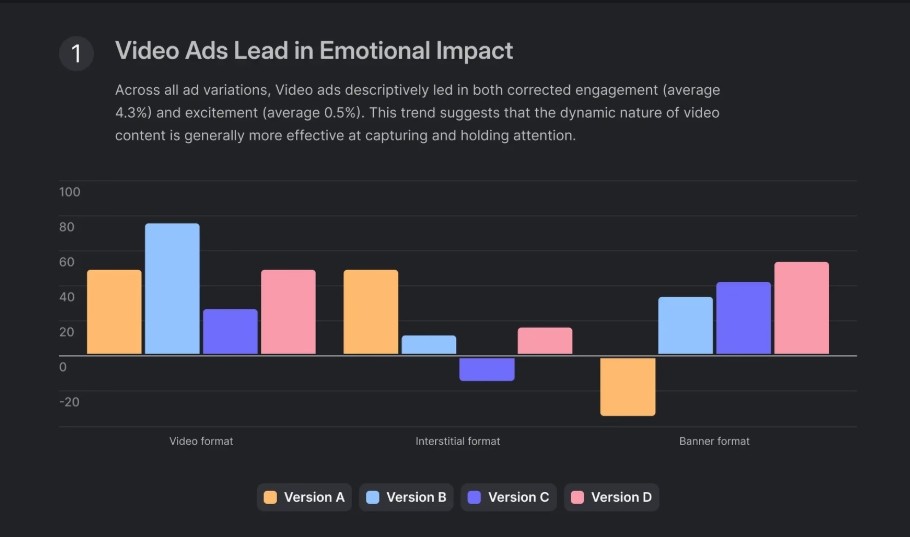

Above: The Emotiv Studio product research dashboard showing the results of an A/B test between ad formats.

Go Beyond A/B Testing

A/B testing remains an essential tool. It provides clear, measurable results and supports continuous improvement.

But on its own, it offers an incomplete picture.

By adding insight into how users experience your content, you can make optimization more precise—and more repeatable.

Emotiv Studio makes it possible to capture that missing layer in real time, helping you move from measuring performance to truly understanding it.

See how real-time insight into engagement, focus, and cognitive load can improve your optimization strategy.

Explore Emotiv Studio Features
